AGQ: Survival Skills in the Age of AI

By Luke Bechtel (@linkbechtel)

“When AGI comes, what will the role of humans be?”

1. "Setting": Why This Matters

![ChatGPT adoption rate](/static/images/agq/chat-gpt-adoption-rate.png)

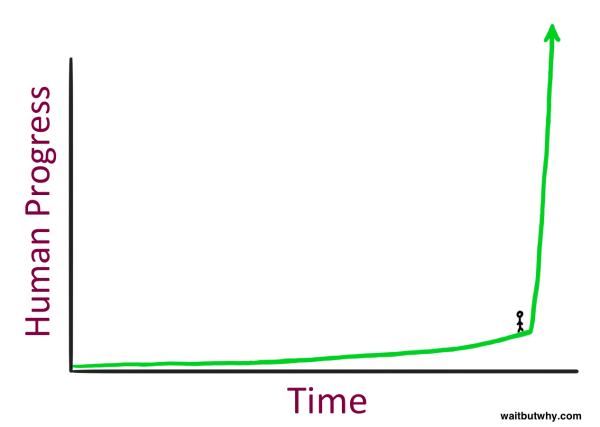

AI progress is now measured in days, not years.

Artificial General Intelligence (AGI) is either here (by some accounts) or coming very soon.

Love it, or hate it; ignore it, or shun it, or embrace it:

The Age of AI is upon us, yet nobody seems totally ready for it.

Many forces are set to play a part in the coming years: politics, economics, sociology, information technology, and others. Yet AGI manages to be simultaneously the most unknown element and the most transformative one.

I have a growing mixture of concern & hope about where my loved ones and I may fit in the new shape of the world once this transformation is complete.

Perhaps you feel the same way.

So let’s talk about it.

What is AGQ?

It’s a term I made up that stands for “

Am I authorized to make a term?

Nope! Sorry 🤗

(We’ll unpack this in detail, so don’t worry if some of the terms are unfamiliar.)

Just as EQ and IQ are different (and hard to measure accurately) facets of "G", or "general intelligence", "

Why give this a new term?

The terms we have right now aren't quite enough.

IQ isn’t quite enough, because it focuses primarily on pattern recognition and says nothing about an individual’s “theory of mind”.

And EQ isn’t quite enough either: while it does cover “theory of mind”, it has focused primarily on human minds, which will soon lose their monopoly on societal participation.

As we push (or are pushed) into this brave new world, we should have some concept of how to behave within it. When working to improve ourselves, it’s important to have a target.

Hence,

Mo’ Minds, Mo’ Problems

Humans are all different.

This has presented us with opportunities and challenges for thousands of years.

However, compared to AI, humans are incredibly similar.

Pick any two humans who seem different from each other: two political enemies, or two people from vastly different walks of life. The differences between their minds may seem stark. But those differences would seem minuscule if you weighed them against the differences between either human and an AI system.

Not only will AI minds be different from our own; they will be mutually diverse, too. There will be many different kinds of AI minds, with different strengths and weaknesses. Some AI minds might even become more distinct from each other than they are from us!

But these differences don’t mean we should give up hope of understanding AI systems.

There are similarities, however small, between how AI systems perceive the world and how we do. They were designed in our image, after all. Further, when you study AI systems, you begin to realize that there are common “subsystems” that all intelligent beings must have, human or not.

Thus, despite our major differences, relating to AI will in some ways resemble relating to other humans: it’s a matter of understanding where we are alike, and where we differ.

In the Age of AI, we’ll have to get comfortable interacting with all these types of minds, woven together in complex networks of multi-agent systems.

We'll all need to develop a strong “Theory of Mind” and learn how to apply the Scientific Method.

Given this, the primary areas that I propose AGQ covers are as follows:

The former list can be broken down even further as:

To really cover this ground, and to do it well, we’ll need to explore a little of how our minds work, how AI minds work, how groups work, and how the world works.

We'll of course need to study state-of-the-art AI systems, both to understand "who we're creating" and as a means of understanding more about ourselves. (& also because they're cool 🤓)

We'll need to learn some Psychology, both individual and group. We'll also need to touch on topics around Cultural Development, Game Theory, & Decision Theory.

Taking all of that knowledge and stretching it to the limit, we'll need to zoom out and look at the Social & Macroeconomic landscape, to predict how developments in AI will fit within the world we inhabit today, and exactly how much they'll change it.

Easy, right? 😉

I won’t claim that I’ll be able to do all of these justice, but at least it'll be a fun conversation.

If you want to follow along, subscribe to my newsletter below to be notified when the next post goes live (it should be in a day or two).

— Luke

WAIT

If you stayed to the end, you're probably cool, and I'd like to connect.

Please comment or subscribe below, follow me on Twitter, or reach out through one of the other social links below.

A L S O... I'm currently looking for engaging work around LLMs & ML systems.

Check out my projects, my Twitter, my LinkedIn, or my GitHub to see what I'm into.

Reach out :)

Stay curious